Blog Post

March 24, 2026

By Jacques Foul

7 Common Mistakes Teams Make When Using AI at Work

We've trained dozens of teams to adopt AI more effectively, and we keep seeing the same mistakes.

The problem isn't the technology

Most AI adoption failures come from how teams approach tools: too fast, too unstructured, and with too little investment in the human side of the equation.

The mistakes below are patterns we see consistently when working with communications, public affairs, and advocacy teams who are somewhere in the middle of their AI journey. Some are obvious in hindsight. Others are easy to rationalise until something goes wrong.

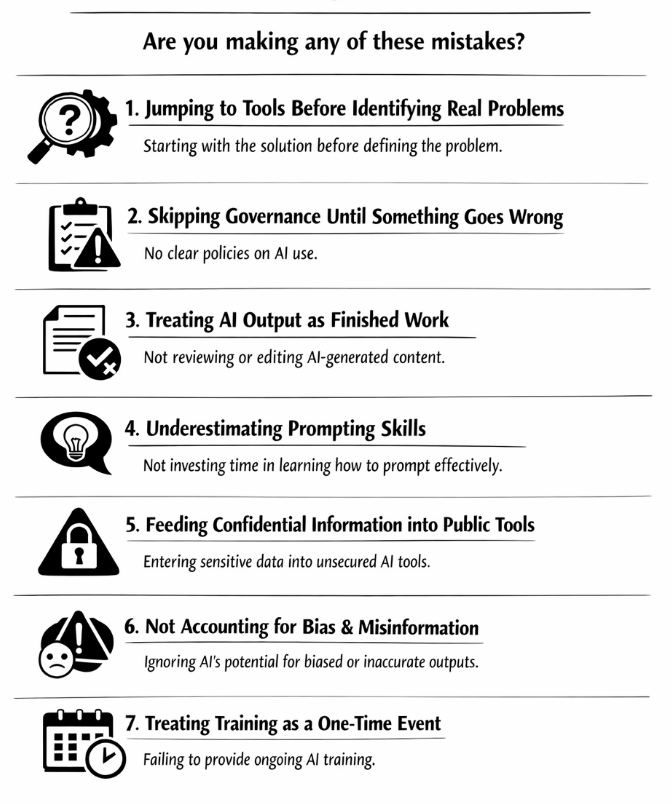

1. Jumping to tools before identifying real problems

The most common mistake is also the first one teams make. Someone sees a demo, reads a headline or hears a competitor mention a new tool, and suddenly the team is trialling software before anyone has asked what problem it's supposed to solve.

Hype-driven adoption skips the step that matters most: mapping your actual workflow pain points. Where is your team losing time? Where is quality inconsistent? Where is there genuine overload, like briefing production, monitoring or first-draft reporting that AI could meaningfully reduce? Without that foundation, tool adoption becomes a distraction rather than an improvement. Most of our clients who come to us frustrated with AI have this in common: they started with the solution and never properly defined the problem.

2. Skipping governance until something goes wrong

No policy means no rules. No rules means people making individual decisions about which tools to use, what data to feed into them, and how to handle the output. That works fine until it doesn't, and when it doesn't, the consequences can be serious: data leaks, regulatory breaches, reputational damage, and in some contexts, significant fines under frameworks like the EU AI Act.

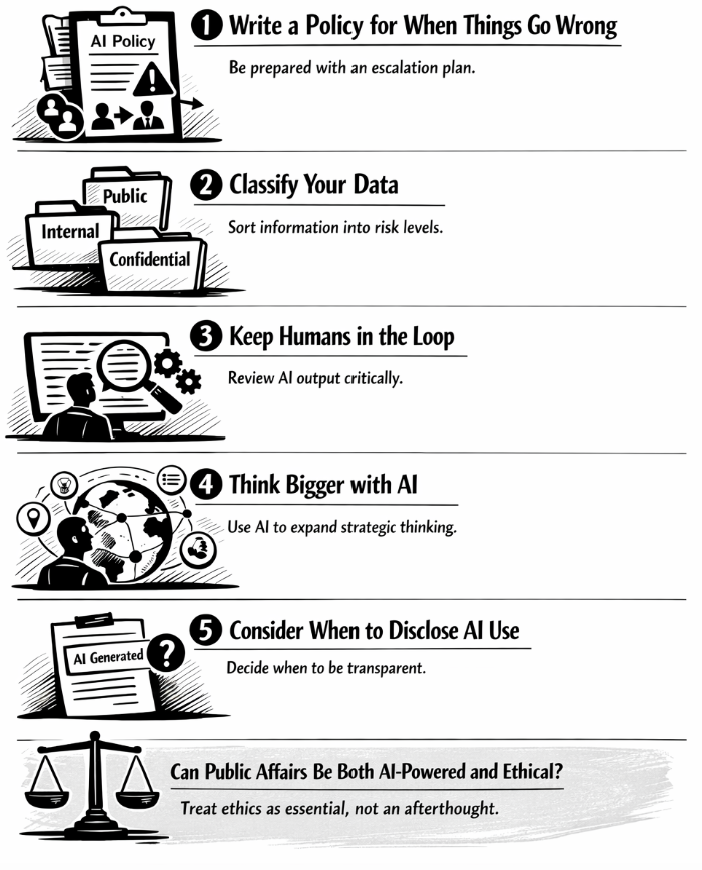

Governance doesn't need to be bureaucratic to be effective. A clear, readable policy that covers approved tools, data handling rules, disclosure requirements, and hard bans takes less time to write than most teams assume.
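For teams with some technical support, the four elements of such a policy can also be written down in a form a script can check. The following is a minimal sketch, not a recommended implementation: the class name `AIUsagePolicy`, the tool name `internal-assistant`, and the data categories are all hypothetical placeholders to be replaced with whatever your own policy says.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class AIUsagePolicy:
    """Hypothetical, simplified model of a written AI usage policy."""
    approved_tools: FrozenSet[str]          # tools the team may use
    banned_data_categories: FrozenSet[str]  # hard bans: never enter these
    disclosure_required: bool = True        # must AI assistance be disclosed?

    def check(self, tool: str, data_categories: Set[str]) -> List[str]:
        """Return a list of policy violations; an empty list means allowed."""
        violations = []
        if tool.lower() not in self.approved_tools:
            violations.append(f"tool not approved: {tool}")
        # Report banned categories in a stable, alphabetical order.
        for category in sorted(data_categories & self.banned_data_categories):
            violations.append(f"banned data category: {category}")
        return violations


# Example values are assumptions for illustration only.
policy = AIUsagePolicy(
    approved_tools=frozenset({"internal-assistant"}),
    banned_data_categories=frozenset({"personal_data", "client_confidential"}),
)
```

Calling `policy.check("internal-assistant", {"public_press_release"})` returns an empty list, while an unapproved tool or a banned data category produces a readable violation message. The written policy remains the source of truth; a sketch like this only makes it easier to apply consistently.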

3. Treating AI output as finished work

AI can produce well-structured text that can also be factually wrong, politically tone-deaf, or subtly misleading. In policy communications, that combination is particularly dangerous. A fabricated legislative reference, a misattributed institutional position or an oversimplified framing of a sensitive issue can weaken credibility fast.

The teams that get into trouble here are usually busy. Under time pressure, the gap between "AI drafted this" and "this is ready to send" collapses. The fix is to build mandatory human review into the workflow, so that nothing AI-drafted goes out unchecked. In our trainings, we make this explicit: AI produces the draft, a human owns the output.
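Where drafts pass through a shared tool or script, the "a human owns the output" rule can be enforced rather than just remembered. Here is a minimal sketch under stated assumptions: `Draft`, `approve`, and `ready_to_send` are hypothetical names, and a real workflow would record more than a reviewer's name.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    ai_generated: bool
    reviewed_by: Optional[str] = None  # name of the human who signed off


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record that a named human has reviewed and now owns the draft."""
    if not reviewer.strip():
        raise ValueError("a named human reviewer is required")
    draft.reviewed_by = reviewer.strip()
    return draft


def ready_to_send(draft: Draft) -> bool:
    """AI-generated drafts are only ready once a human has signed off."""
    return (not draft.ai_generated) or draft.reviewed_by is not None
```

An AI-generated draft fails `ready_to_send` until `approve` has been called with a reviewer's name. The check does not make the review itself any better, but it stops the review step from silently disappearing under deadline pressure.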

4. Underestimating how much prompting skill matters

A vague prompt returns an answer that sounds plausible but lacks the precision, context, and constraints the task actually required. Teams then conclude that AI isn't useful for their work, when the real issue is that no one taught them how to ask properly.

Prompting is a learnable skill. It requires practice, feedback, and iteration on real tasks. What we've seen working with teams across the EU is that the gap between a team that finds AI genuinely useful and one that finds it frustrating almost always comes down to prompting quality.

5. Feeding confidential information into public tools

This one ends careers and breaches contracts (we’ve seen it with the recent Deloitte case in Australia). Confidential advocacy strategies, unpublished policy positions, personal data, legally sensitive communications: all of it has found its way into public-facing AI tools at organisations that hadn't established clear rules about what could and couldn't be entered.

Public LLMs are not secure environments. Depending on the provider's terms, data entered into them may be used for model training, retained or reviewed by the provider, or simply exist outside your organisation's control from the moment you paste it in. The rule is simple and non-negotiable: if the information is confidential, it does not go into a public AI tool. Full stop. If your team doesn't have this written down somewhere official, that's the first thing to fix.
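A written rule can be backed by a simple automated screen that runs before text is pasted into a public tool. The sketch below is a rough backstop only, and it rests on loud assumptions: the patterns and labels in `SENSITIVE_PATTERNS` are illustrative, and pattern matching cannot recognise most confidential content (an unpublished policy position looks like any other text). It complements the rule and human judgement; it does not replace either.

```python
import re

# Illustrative patterns only; a real list would come from your own policy.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "confidentiality marker": re.compile(
        r"\b(confidential|internal only|not for distribution)\b",
        re.IGNORECASE,
    ),
}


def find_sensitive(text: str) -> list:
    """Return the labels of every pattern that appears in the text."""
    return [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    ]
```

If `find_sensitive` returns anything, the text should not be submitted until a person has looked at it. An empty result does not mean the text is safe to share, only that none of the listed patterns matched.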

6. Not accounting for bias and misinformation risks

AI models reflect the data they were trained on. That data contains biases, gaps, outdated information, and skewed representations of certain communities, institutions, and political contexts. In public affairs and policy communications, where narratives carry real consequences, acting on biased or inaccurate AI output without scrutiny is a genuine risk.

A campaign message built on a skewed AI framing of a social issue, a briefing that misrepresents a minority stakeholder group's position, a monitoring summary that systematically underweights certain sources: each of these can generate public backlash, damage relationships, and undermine the credibility your team has spent years building. Critical review of AI output means catching not only factual errors but also the subtler distortions that a rushed reader won't notice until it's too late.

7. Treating training as a one-time event

The AI landscape is constantly changing. Tools, prompting techniques, and regulatory requirements have all shifted in the past six months. A team that received AI training twelve months ago and hasn’t revisited the topic since is now operating on outdated foundations.

In our trainings, we consistently see that the teams making the most effective use of AI are the ones that have built regular learning into their working rhythm: short experiments, shared findings and regular check-ins on what's changed.

Is your team making any of these mistakes?

Probably at least one. We’re not here to criticise anyone. This is the reality for most organisations navigating AI adoption without a clear roadmap. The good news is that none of these mistakes is irreversible. Most of them are fixed by the same thing: stepping back, establishing a proper foundation and investing in the skills your team actually needs.

If you want to audit where your team stands and build the capability to use AI well, we offer practical workshops designed for communications and public affairs professionals who are ready to move beyond trial and error.