
Blog Post

March 24, 2026

By András Baneth

How to Build an AI-Ready Communication Team in 2026

Building an AI-ready communication team in 2026 takes more than adopting the right tools. It takes the right structure, governance and skills to make it last.


Why most communication teams aren't ready

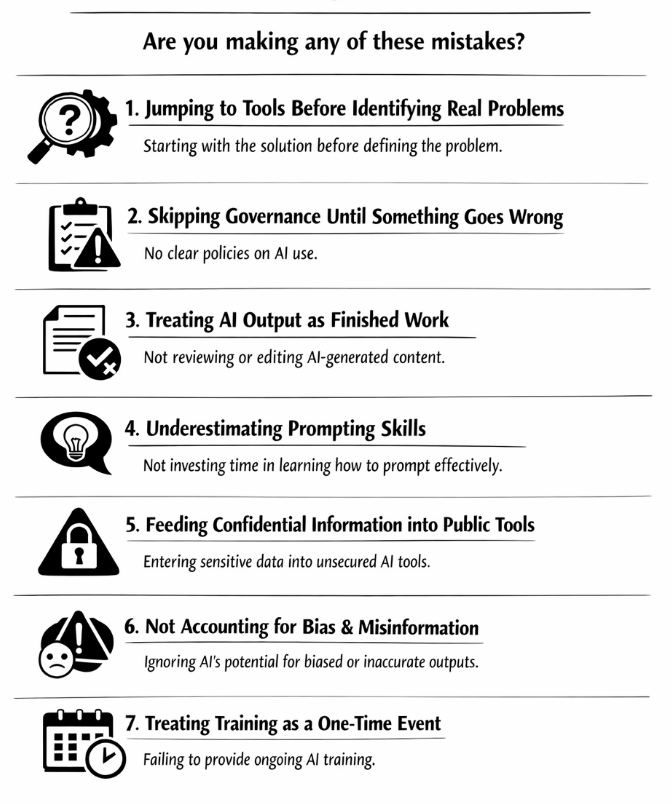

AI adoption in communication is moving faster than most teams can keep up with. People are already using it to draft press releases, monitor media coverage and summarise briefings. But most teams skip the foundation and jump straight to tools. That's how you end up with a colleague pasting a confidential stakeholder strategy into a public LLM, or an AI-drafted statement going out without a human ever reading it critically. In our trainings, this is the pattern we see most consistently: teams that adopt AI tools before the structure, governance and skills are in place.

1. Map your workflows before you touch a tool

Before any AI decision, sit down with your team and map every core process: drafting, media monitoring, stakeholder reporting, translation, internal comms. For each one, ask two questions. Where could AI accelerate this? And where could it cause damage?

A drafting workflow is a strong candidate for AI support. A workflow involving confidential legal positions or unpublished financial data is a high-risk zone where AI assistance needs strict controls. Most of our clients admit they've never done this mapping exercise. It's the single most valuable thing you can do before starting to use a new AI tool.

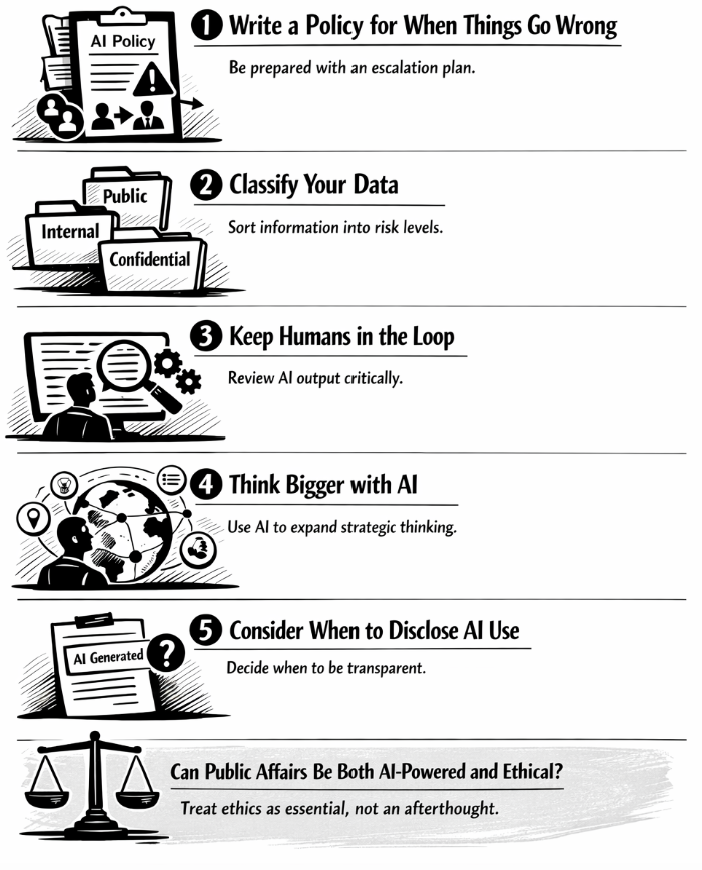

2. Write your AI policy before someone breaks a rule

An AI policy is often seen as a legal document for the shelf. It's actually an operational guide your team needs to review before using an AI tool. It should answer five things clearly:

- Which tools are approved

- What data can and cannot be fed into them

- Disclosure rules for AI-assisted output

- Ethical red lines

- What happens when a mistake is made

Build in one hard rule from day one: no confidential information or unpublished positions go into any public-facing LLM. Keep the policy short enough to read in ten minutes. If it needs a lawyer to decode it, it won't be followed.

3. Build a cross-functional group to own it

AI policy written by comms alone will have blind spots. You need a small working group (communications, legal, IT, and ideally someone thinking about ethics) that co-owns the policy, approves new tools, runs quarterly audits, and gathers questions from the team.

This group doesn't need to meet weekly. It needs to exist, have clear ownership, and have authority to say no. Without it, policy drifts and accountability disappears.

4. Get leadership buy-in with numbers, not enthusiasm

Leadership support for AI initiatives dies when it's based on vague promises about transformation. Anchor your case in specifics. Quantify what's at stake: time saved, cost per output and error rates. Be equally honest about the risks, including reputational exposure, regulatory liability under the EU AI Act, and data security gaps.

Most of our clients find that honest risk framing wins sign-off faster than optimistic pitches. Leaders are more likely to believe people who've thought it through.
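For teams that want to put the three numbers side by side, here is a minimal sketch in Python. Every figure in it is a made-up placeholder, and the function name `summarise` and the cost formula (staff time plus a per-output tool cost) are assumptions for illustration, not a standard method. Replace them with your own measurements.

```python
def summarise(label, hours_per_output, errors, outputs_reviewed,
              hourly_cost, tool_cost_per_output=0.0):
    """Return the three figures named above for one way of working."""
    return {
        "label": label,
        "hours_per_output": hours_per_output,
        # Assumed cost model: staff time plus any per-output tool cost.
        "cost_per_output": hours_per_output * hourly_cost + tool_cost_per_output,
        # Share of reviewed outputs that contained an error.
        "error_rate": errors / outputs_reviewed,
    }


# Placeholder figures only; measure your own baseline before pitching.
manual = summarise("manual", hours_per_output=3.0, errors=2,
                   outputs_reviewed=40, hourly_cost=60.0)
assisted = summarise("ai_assisted", hours_per_output=1.5, errors=3,
                     outputs_reviewed=40, hourly_cost=60.0,
                     tool_cost_per_output=5.0)

OUTPUTS_PER_MONTH = 20  # hypothetical volume

hours_saved_per_month = (
    manual["hours_per_output"] - assisted["hours_per_output"]
) * OUTPUTS_PER_MONTH
cost_saving_per_output = manual["cost_per_output"] - assisted["cost_per_output"]
# A positive value means the AI-assisted workflow produced more errors.
error_rate_change = assisted["error_rate"] - manual["error_rate"]

print(f"Hours saved per month: {hours_saved_per_month}")
print(f"Cost saving per output: {cost_saving_per_output}")
print(f"Change in error rate: {error_rate_change:+.1%}")
```

In this invented example the error rate goes up slightly while time and cost go down. Reporting that trade-off openly is the kind of honest risk framing described above.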

5. Run a prompt bootcamp before anyone uses AI on real work

This is the step most teams skip and it's the one that makes everything else work. Before your team uses AI, they need to know how to prompt well.

That means hands-on workshops where participants craft prompts live, test them on real tasks, and benchmark AI output against their manual baseline. In our trainings, we've found that even experienced communicators significantly underuse the tools because they're prompting too broadly. One structured session built around real tasks can change that.

6. Pilot 3 to 5 tools on low-risk tasks, then cut fast

Don't evaluate tools in a vacuum. Run structured pilots on low-stakes internal tasks: internal newsletters, first-draft summaries or monitoring updates. Test 3 to 5 options simultaneously against the same criteria: output quality, data security, ease of use, cost, and compliance with your policy.

Set a clear kill criterion upfront. If a tool fails to meet minimum quality or security thresholds within the pilot window, cut it. We’ve found that most pilots drag on because no one has defined what failure looks like.
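One way to make the kill criterion concrete is to write it down as a simple scoring rule before the pilot starts. The sketch below is illustrative only: the five criteria come from the list above, but the 1-to-5 scale, the minimum thresholds, the tool names and all scores are assumptions invented for the example.

```python
from dataclasses import dataclass

# The five criteria named in the text.
CRITERIA = ("output_quality", "data_security", "ease_of_use",
            "cost", "policy_compliance")

# Hypothetical kill criterion, agreed before the pilot starts:
# a tool scoring below either minimum is cut, whatever its total.
MINIMUMS = {"output_quality": 3, "data_security": 4}


@dataclass
class PilotResult:
    tool: str
    scores: dict  # criterion name -> score from 1 (poor) to 5 (excellent)


def failed_criteria(result):
    """List the kill-criterion thresholds this tool missed."""
    return [name for name, minimum in MINIMUMS.items()
            if result.scores[name] < minimum]


def evaluate(results):
    """Split pilots into tools to keep (ranked by total score) and tools to cut."""
    kept, cut = [], {}
    for result in results:
        failures = failed_criteria(result)
        if failures:
            cut[result.tool] = failures
        else:
            total = sum(result.scores[name] for name in CRITERIA)
            kept.append((result.tool, total))
    kept.sort(key=lambda item: item[1], reverse=True)
    return kept, cut


# Placeholder pilots; names and scores are invented.
pilots = [
    PilotResult("Tool A", {"output_quality": 4, "data_security": 5,
                           "ease_of_use": 3, "cost": 3, "policy_compliance": 4}),
    PilotResult("Tool B", {"output_quality": 2, "data_security": 5,
                           "ease_of_use": 5, "cost": 4, "policy_compliance": 4}),
    PilotResult("Tool C", {"output_quality": 4, "data_security": 3,
                           "ease_of_use": 4, "cost": 5, "policy_compliance": 3}),
    PilotResult("Tool D", {"output_quality": 5, "data_security": 4,
                           "ease_of_use": 4, "cost": 2, "policy_compliance": 5}),
]

kept, cut = evaluate(pilots)
print("Keep:", kept)
print("Cut:", cut)
```

The design choice worth noting is that the thresholds act as a gate, not as part of the total. A tool that is easy to use and cheap still gets cut if it misses the quality or security minimum, which is what defining failure upfront means in practice.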

7. Block team time for experimentation

AI capabilities are changing faster than any team can track by reading about them. The only way to stay current is structured experimentation. Reserve some of your team’s time for testing new use cases and tools.

Pair this with a light monthly report covering one win, one failure and one open question. It keeps learning visible and prevents experimentation from becoming hobby work that never improves the team's actual practice.

8. Mandate human review and disclose AI use

All AI output gets reviewed by a human before it goes anywhere external. This should be a professional standard. AI can draft fluently and still be wrong, incomplete, or tonally off in ways that only a human with context will catch.

On disclosure: flag AI involvement in your outputs wherever it is relevant. Stakeholders and regulators increasingly expect this. Getting ahead of that expectation builds trust; being caught hiding AI use destroys it.

9. Communicate internally or watch resistance build up

The technical work fails without internal buy-in. People resist AI adoption for real reasons: fear of job displacement, uncertainty about quality standards, distrust of new tools. Ignoring those concerns makes them louder.

Run an internal campaign at your organisation with written explainers and manager briefings that answer the why, the how, and the what's in it for your team, clearly and honestly. Create feedback channels and actually respond to what comes through. The teams that handle this well treat internal communication about AI as a change management project, not a memo.

Is there a shortcut to building an AI-ready team?

No, there isn't. Building an AI-ready communication team takes deliberate preparation: policy before tools, skills before deployment and testing before scaling up. What we've seen working with communication teams is that the organisations that move well are the ones that built the foundation first and then accelerated from a position of confidence.

If your team is starting this journey, we offer in-person and online AI workshops designed specifically for communication professionals who need to do this right.