
Blog Post

March 24, 2026

By András Baneth

How to Develop an Ethical Use of AI in Public Affairs

We work with public affairs teams to help them build AI practices that are not just effective, but ethical and safe. Here is where to start.


Ethics is one of the key conditions for AI adoption

The public affairs profession runs on trust. Trust from your stakeholders who share sensitive strategic information. Trust from institutions that expect accurate and honest engagement. Trust from the public, which increasingly scrutinises how organisations use technology to shape policy narratives.

AI introduces new ways to break that trust, often quietly and without obvious warning signs: a confidential position shared with the wrong tool, a biased output acted on without scrutiny, or an AI-drafted document presented as entirely human work.

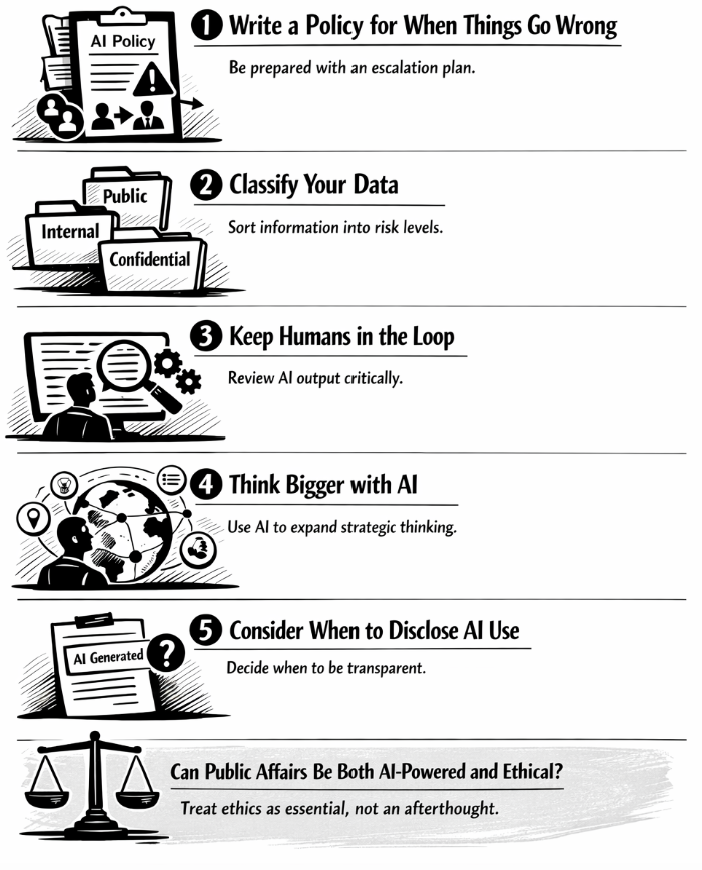

1. Write a policy that covers what happens when things go wrong

Most AI policies focus on what teams are allowed to do. The more important question is what happens when something goes wrong, because something will go wrong at some point.

Your AI policy needs to address failure scenarios explicitly: who is responsible, what the escalation path looks like, and how the team responds. It should also be clear about what AI is being used for across the organisation. Use cases like drafting, monitoring and brainstorming carry different risk levels and deserve different treatment, and the policy should spell out that treatment for each.

2. Classify your data before you decide what AI can touch

Not all information carries the same risk. Public-facing content, published reports, general research: these can reasonably be worked with in AI tools. Internal documents, strategic plans, unpublished positions, stakeholder relationship notes: these require more caution. Confidential client data, legally sensitive communications, personal information: these should not enter public AI tools under any circumstances.

The practical step is simple: build a data classification framework that your team actually uses. Three tiers work well (public, internal and confidential) with a clear rule attached to each. In our trainings, teams that do this exercise find it clarifying in ways that go beyond AI governance. It forces a conversation about what information is actually sensitive and why, which most organisations have never had explicitly. That conversation is worth having regardless of AI.
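One way to make the three tiers concrete is to write them down in a form a team can check against. The Python sketch below is illustrative only: the names `Tier`, `Decision`, `RULES` and `decide` are hypothetical, and the rule attached to each tier is an assumption based on the guidance above, not a standard. Your own framework may draw the lines differently.

```python
from enum import Enum


class Tier(Enum):
    """The three data tiers described above."""
    PUBLIC = "public"              # published reports, general research
    INTERNAL = "internal"          # strategic plans, unpublished positions
    CONFIDENTIAL = "confidential"  # client data, legally sensitive, personal


class Decision(Enum):
    """Hypothetical outcomes for 'can this go into a public AI tool?'"""
    ALLOW = "allow"
    REVIEW = "review"  # needs caution: check with the policy owner first
    BLOCK = "block"


# Assumed rule per tier; adjust to match your organisation's policy.
RULES = {
    Tier.PUBLIC: Decision.ALLOW,
    Tier.INTERNAL: Decision.REVIEW,
    Tier.CONFIDENTIAL: Decision.BLOCK,
}


def decide(tier: Tier) -> Decision:
    """Return the rule attached to a tier."""
    return RULES[tier]


if __name__ == "__main__":
    # Example labels taken from the categories listed above.
    examples = {
        "published report": Tier.PUBLIC,
        "strategic plan": Tier.INTERNAL,
        "confidential client data": Tier.CONFIDENTIAL,
    }
    for item, tier in examples.items():
        print(f"{item}: {tier.value} -> {decide(tier).value}")
```

The value of writing it down this way is less the code than the forced decision: every type of document has to be assigned a tier, and every tier has to have exactly one rule.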

3. Keep humans in the loop

Human-in-the-loop practice means applying critical thinking to what AI produces: questioning the framing, checking the sources and asking whether the output reflects the full complexity of the situation or a flattened version of it. When a result looks too clean, too convenient or too aligned with what you wanted to hear, that's the moment to ask for counter-arguments. Prompt your AI model to consider the opposing position. Ask it what an expert would disagree with. Most of our clients find that this habit improves AI output quality as well as their own analytical thinking.

4. Use AI to think bigger, not just to work faster

There is a version of AI adoption that makes teams more productive without making them any more strategic. They draft faster, summarise faster and monitor faster. The work is quicker but the thinking is the same. We see this as a missed opportunity because over time, it creates a dependency: teams that are efficient with AI but less capable without it.

The more valuable use of AI in public affairs is for tasks that expand what's possible, like:

- Using AI to explore scenarios your team wouldn't have had time to map

- Pressure-testing a strategic position from three different stakeholder perspectives

- Identifying regulatory signals across languages and geographies that your monitoring would otherwise miss

5. Assess whether you need to disclose AI use

There is no universal rule about when to disclose AI use in public affairs work. A first draft produced with AI and then substantially rewritten by a human is different from a monitoring report where AI did the heavy lifting and human review was light. Some organisations require disclosure under emerging regulatory frameworks, while others leave it to professional judgment.

We see a clear pattern in our work: organisations in the most heavily regulated sectors, like pharma and life sciences, are also the ones that can least afford an AI-related reputational hit. As a result, it is up to them to set the bar higher than the minimum legal requirements: to be explicit about when and how they use AI, to put real guardrails and human review in place, and to treat transparency as part of their licence to operate rather than a nice-to-have.

Can public affairs be both AI-powered and ethically grounded?

Yes, but it requires treating ethics as a key part of AI use, rather than an empty statement. The teams doing this well are the ones that have thought through the hard scenarios in advance, built clear habits around data handling and human review, and created a culture where people feel confident raising concerns when something doesn't feel right.

If your team is ready to develop an AI practice that is both capable and ethical, we offer workshops designed specifically for public affairs professionals navigating this challenge.